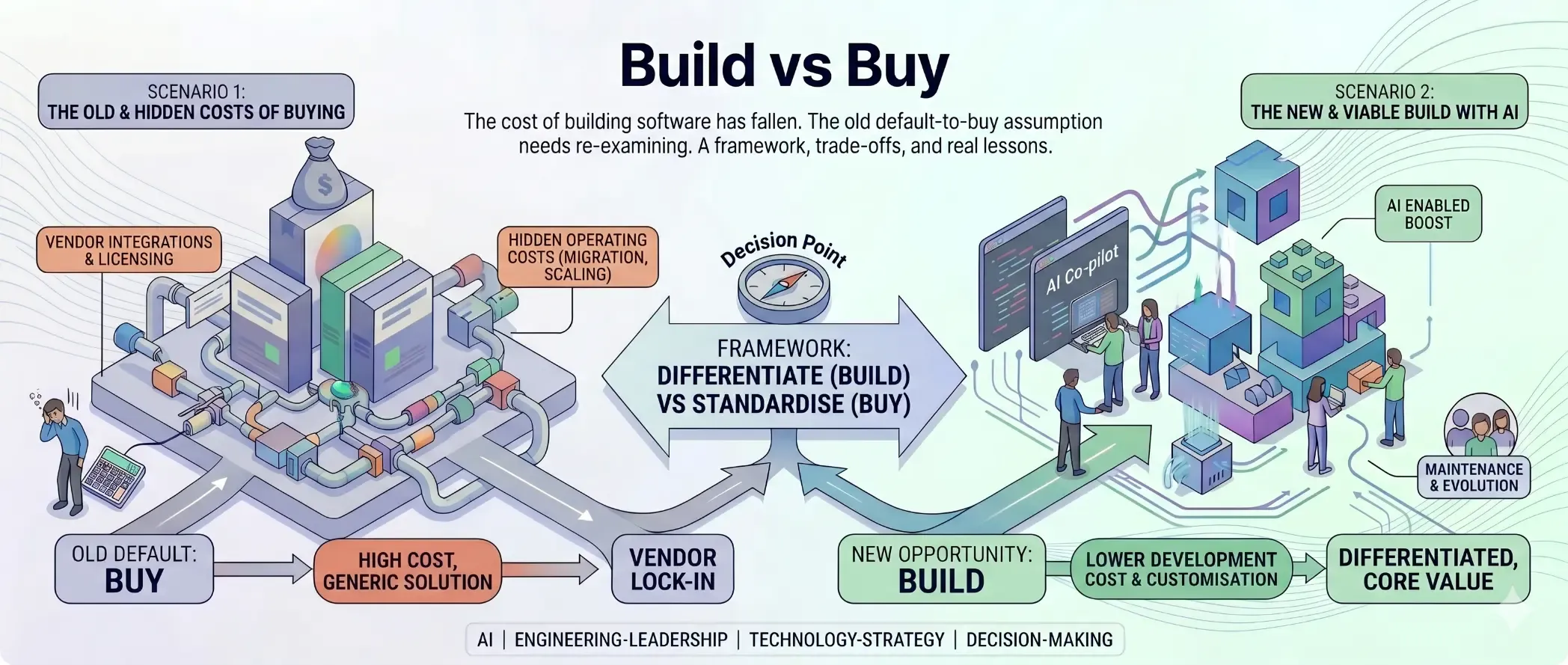

The build vs buy rule most engineering leaders follow was written in a different era. It was written when a developer cost $150,000 a year, when building a feature took months, when a small team couldn’t reasonably maintain a custom system alongside everything else they were responsible for. The economics of that era made “buy” the sensible default. Vendors had scale, specialist knowledge, and the capacity to invest in product development that most organisations couldn’t match. SaaS commoditised everything, and building felt increasingly like an act of hubris. Why write your own CRM when Salesforce has a thousand engineers improving theirs?

That logic still holds in some contexts. But AI has changed the cost of building software enough that defaulting to buy, without re-examining the case, is now its own form of risk.

The Old Equation

The “buy” default emerged from real constraints. Building was expensive, slow, and created ongoing maintenance debt. Vendors spread that cost across customers: a licence got you a working system, a support team, and someone else’s compliance programme. SaaS deepened the logic. By the mid-2010s there was a vendor for almost everything, and the pressure to standardise pointed toward buying. That was the right call, for that era.

What AI Has Changed

The cost of building software is collapsing.

Not metaphorically. Literally. Work that previously took a senior engineer weeks is now done in days. Prototypes that once took months to reach a demo-able state are being produced in sprints. An experienced developer working with AI tooling can produce, review, and iterate on code at a pace that wasn’t possible three years ago.

The “we don’t have capacity to build this” objection, which was often the real reason organisations bought rather than built, is weakening. Teams that once lacked the bandwidth to build and maintain a custom integration now have genuine options. The economics of build have moved.

That shift triggered a moment of genuine hyperbole. As tools like Claude made autonomous coding increasingly viable, a wave of commentary called it the end of SaaS. The “SaaSpocalypse” narrative emerged: if AI could produce working software in hours, why would any organisation pay recurring licence fees indefinitely? Build your own version, own it outright, pay nothing ongoing. The argument looked compelling on the surface, and the pace of AI development made it feel urgent.

That conversation has since quieted, for good reason. Building software and running software are different problems. A prototype that works in a demo is not a production system. Production means uptime, security patching, incident response, database maintenance, user support, and the steady accumulation of edge cases that no initial build anticipates. Vendors carry those costs invisibly inside the licence fee. When you build, they become yours. The SaaSpocalypse didn’t arrive because only the economics of writing software changed; the economics of running it did not.

Lower build costs matter most for the total cost of ownership argument. Historically, build advocates would win the initial cost comparison but lose on the long-term maintenance and evolution costs. A custom system needed ongoing engineering investment that compounded over time. That assumption is now less reliable. The operational burden of running a system remains, but the engineering effort needed to maintain and evolve it has dropped: an AI-augmented team of two or three engineers can maintain and evolve a custom build that would previously have required a team twice the size. The ongoing cost of building, not just the upfront cost, has fallen.

This doesn’t mean buy is wrong. It means the threshold has shifted. Decisions that tilted toward buy three years ago, on the basis of capacity or maintenance cost, should be re-examined under the current economics.

A Framework: Differentiate vs Standardise

Is this a capability you need to differentiate on, or one you need to standardise? That question does more work than the build vs buy framing it replaces.

The practical framework:

Lean toward build when:

- The capability is unique to your organisation

- It is core to the business or can be positioned as competitive advantage

- Heavy integration needs make a vendor solution unwieldy

- Long-term TCO under a vendor is unfavourable

- You have, or can build, in-house capability

Lean toward buy when:

- The capability is commoditised and you need to standardise

- The vendor brings compliance and security coverage you’d otherwise need to build

- You want to leverage established workflows and industry conventions

- Speed to market is critical and in-house depth doesn’t exist

- The risk profile is better managed through a vendor relationship

A Customer Engagement Platform (CEP) is a useful example of when buying makes sense. It’s a standardised, well-understood category. Vendors bring years of workflow refinement, deliverability infrastructure, compliance frameworks, and integrations. Unless your engagement model is genuinely differentiated, building a CEP from scratch diverts engineering effort from your actual competitive advantage. You’re standardising, not differentiating. Buy.

In practice, the cleanest decisions are rarely pure build or pure buy. Most organisations do both: buy a platform for the commoditised layer, build on top of it where the work is differentiating. The question isn’t which one. It’s where the boundary sits.

The Hidden Cost of Buying

The decision usually looks cleaner than it is because the full cost of buying is rarely presented honestly in a business case.

The headline licence cost is one number. It’s usually the number that gets approved. But the actual cost of a vendor solution runs considerably higher, across costs that rarely appear in the initial proposal: once-off costs, from implementation through to eventual exit, and recurring costs beyond the licence itself.

| Hidden Cost Category | Type | Description |
|---|---|---|
| Consulting | Once-off | External expertise if in-house capability doesn’t exist |
| Initialisation | Once-off | Getting the system to a usable baseline state |
| Configuration / Setup | Once-off | Tailoring the product to your workflows and requirements |
| Migration | Once-off | Moving from an existing system or data model into the new platform |
| Integration Build | Once-off | Connecting the vendor to your existing stack (APIs, ETL pipelines, identity providers); frequently scoped separately and underestimated |
| Change Management | Once-off | The people cost of getting the organisation to actually adopt the new system; often skipped, frequently the reason implementations fail |
| Productivity Dip | Once-off | Staff carry the weight of the old system winding down and the new one spinning up at the same time, while still doing their day jobs. Productivity suffers in the interim. |
| Training | Recurring | Onboarding your team and users to the new system |
| Licence Escalation | Recurring | Annual price increases, per-seat growth, and tier upgrades as usage scales; rarely matches the original contract rate over a 3–5 year horizon |
| Support Tiers | Recurring | Base plans often exclude priority support, SLA guarantees, or dedicated account management; enterprise support costs extra |
| Administration | Recurring | Maintaining the system as your business changes: new workflows, user types, and integrations; not a one-time cost |
| Integration Maintenance | Recurring | Vendor API changes and deprecations break your integrations over time; someone has to keep them alive |
| Sunset / Exit | Once-off | Extracting data, migrating away, and decommissioning when you eventually leave |

These costs are not hypothetical. They appear in almost every significant vendor implementation, sometimes anticipated, often not. Configuration costs described as “minimal” in the sales process turn into multi-month professional services engagements. Data migration surfaces data quality problems no one budgeted for. Training is perpetual because staff turn over. The sunset cost, invisible when you sign, is one of the most reliably underestimated costs in technology delivery.

What these costs add up to is commitment. The moment you sign, the migration begins, the training starts, the integrations get built. By the time the system is live, the organisation has invested enough that leaving is genuinely painful. Vendor lock-in is not a contractual clause. It is the accumulated weight of decisions made during implementation.

That commitment is not necessarily a bad thing. If the vendor uplifts your capability through better workflows, compliance coverage you couldn’t build yourself, or access to R&D you’d never fund internally, the commitment is justified. You are trading flexibility for capability, and that trade can be worth it.

The right lens is investment thinking. What is the total cost over the full term, and what does the organisation get in return, over what period? A vendor that costs twice as much but halves time to market or eliminates a compliance risk you’d otherwise carry indefinitely can be the right call. A vendor that solves 80% of the problem at full price, with a painful exit built in, is a different calculation. Run the TCO. Estimate the payback period. None of this disqualifies buying. But make the commitment with eyes open.
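To make “run the TCO” concrete, here is a minimal sketch of a term-based comparison in Python (3.9+). Every figure, the `CostProfile` and `payback_year` names, and the flat annual escalation rate are hypothetical placeholders, not real vendor pricing. The point is the shape of the calculation: once-off costs (including the exit) are added to recurring costs that compound each year, rather than comparing a year-one licence against a build estimate.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class CostProfile:
    """Hypothetical cost profile for one option over a fixed term."""
    name: str
    once_off: dict[str, float]   # implementation, migration, exit, etc.
    recurring: dict[str, float]  # year-one annual costs
    escalation: float = 0.0      # assumed flat annual increase (0.07 = 7%)

    def annual_costs(self, years: int) -> list[float]:
        """Recurring cost for each year, compounding the escalation rate."""
        base = sum(self.recurring.values())
        return [base * (1 + self.escalation) ** year for year in range(years)]

    def tco(self, years: int) -> float:
        """All once-off costs plus every year's recurring costs over the term."""
        return sum(self.once_off.values()) + sum(self.annual_costs(years))


def payback_year(investment: float, annual_benefit: float, years: int) -> int | None:
    """First year in which cumulative benefit covers the investment, else None."""
    cumulative = 0.0
    for year in range(1, years + 1):
        cumulative += annual_benefit
        if cumulative >= investment:
            return year
    return None


# Illustrative figures only; replace with your own quotes and estimates.
buy = CostProfile(
    name="vendor platform",
    once_off={"configuration": 80_000, "migration": 50_000,
              "integration build": 60_000, "exit": 40_000},
    recurring={"licence": 120_000, "support tier": 20_000, "administration": 30_000},
    escalation=0.07,
)
build = CostProfile(
    name="in-house build",
    once_off={"initial build": 250_000},
    recurring={"engineering": 150_000, "hosting": 20_000},
)

for option in (buy, build):
    print(f"{option.name}: 5-year TCO {option.tco(5):,.0f}")

# Hypothetical benefit estimate: 230,000 invested, 100,000 of value per year.
print(payback_year(investment=230_000, annual_benefit=100_000, years=5))  # 3
```

The sketch deliberately leaves out discounting and the timing of the exit cost; a fuller model would discount future years and place the sunset cost where it actually falls. Even this rough version makes the hidden categories visible line by line, which is most of the value.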

Build vs Buy: The Trade-offs

For reference, the honest comparison:

| | Build | Buy |
|---|---|---|
| Pros | Flexible and customisable to your exact needs | Lower upfront cost |
| | Can become a genuine competitive advantage | Faster time to value: deploy in days or weeks, not sprints |
| | Fully owned: no licence fees | Follows established workflows and conventions |
| | Architecture decisions are yours | Vendor support when things go wrong |
| | | Easier stakeholder buy-in (familiar, utilitarian) |
| | | No technical maintenance burden |
| | | Risk can be partially outsourced to vendor |
| | | Continuous R&D: product improves without your engineering effort |
| | | Pre-built compliance certifications (SOC 2, ISO 27001, GDPR tooling) |
| | | Broader ecosystem: integrations, marketplaces, community plugins |
| Cons | Higher startup cost | Inflexible: you follow the vendor’s model |
| | Requires ongoing maintenance and upkeep | Vendor lock-in, including data sovereignty and portability risk |
| | Infrastructure and architectural decisions add complexity | Feature queue dependency: roadmap decisions are made for thousands of customers, not you |
| | When things break, your team owns it | AI-era obsolescence risk: pre-AI vendor architecture may not adapt as AI-native alternatives emerge |
| | AI-assisted development can create false confidence: code that looks complete but misses structural concerns (security, authentication, data integrity) | Integration engineering cost: connecting vendor APIs to your stack is its own hidden burden |
| | | The “good enough” trap: vendors solve 80%; the last 20% is often where your differentiation lives |

In Practice: Football Australia

Football Australia runs digital systems supporting over two million records across the game. Players, referees, coaches, administrators, and fans across multiple competitions. The technology reflects decades of organic growth: systems built for narrower purposes than they now serve, databases that grew in silos, and monolithic platforms carrying more complexity than their original purpose required. It all works, but it is harder to change than it should be.

We are working through a digital transformation with a lean in-house team. The strategy is hybrid: build what is core, buy where standardisation makes sense.

On the buy side, we implemented a Customer Engagement Platform. The rationale was straightforward: get an immediate uplift to compliance, reporting, and audience targeting capabilities that were either missing or underpowered, without building from scratch. The goal was not to differentiate on customer communications. It was to reach a competent baseline quickly.

On the build side, the work sits where flexibility matters most. A single person can be a registered player in one state, a referee in another, an administrator in a third, and a fan at the same time. No vendor platform models that cleanly. Re-architecting the data layer to support a single unified identity across all of those contexts is work that has to be built. It needs deep knowledge of the data model, membership logic, and the workflows connecting registration systems to communication platforms. A small team is doing this in-house, using AI-assisted development tooling that makes the pace workable.
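The core idea, one person record carrying many contextual roles instead of one record per system, can be sketched as a small data model. This is a hypothetical illustration, not Football Australia’s actual schema: the `RoleType`, `Role`, `Person`, and `unify` names, the role list, and the sample rows are all placeholders. It also assumes records from each source system have already been matched to a shared `person_id`; that matching is the genuinely hard part and is not shown.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum


class RoleType(Enum):
    PLAYER = "player"
    REFEREE = "referee"
    ADMINISTRATOR = "administrator"
    FAN = "fan"


@dataclass(frozen=True)
class Role:
    """One context in which a person participates in the game."""
    role_type: RoleType
    state: str | None    # e.g. "NSW"; None for roles not tied to a state
    source_system: str   # the (hypothetical) system that asserted this role


@dataclass
class Person:
    """A single identity that carries every role, instead of one record per system."""
    person_id: str
    name: str
    roles: set[Role] = field(default_factory=set)

    def roles_in(self, state: str) -> list[RoleType]:
        """Role types this person holds in a given state, in a stable order."""
        return sorted((r.role_type for r in self.roles if r.state == state),
                      key=lambda t: t.value)


def unify(rows: list[tuple[str, str, Role]]) -> dict[str, Person]:
    """Group already-matched (person_id, name, role) rows into one Person each."""
    people: dict[str, Person] = {}
    for person_id, name, role in rows:
        people.setdefault(person_id, Person(person_id, name)).roles.add(role)
    return people


# Hypothetical rows, as they might arrive from separate registration and engagement systems.
rows = [
    ("p-001", "Alex Example", Role(RoleType.PLAYER, "NSW", "registration")),
    ("p-001", "Alex Example", Role(RoleType.REFEREE, "VIC", "referee-accreditation")),
    ("p-001", "Alex Example", Role(RoleType.ADMINISTRATOR, "QLD", "club-admin")),
    ("p-001", "Alex Example", Role(RoleType.FAN, None, "engagement-platform")),
    ("p-002", "Sam Example", Role(RoleType.PLAYER, "WA", "registration")),
]
people = unify(rows)
print(people["p-001"].roles_in("VIC"))
```

Keeping `source_system` on each role is a deliberate choice in this sketch: it records which system asserted a role, so the unified identity can still be traced back to the registration and communication platforms it connects.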

The work is ongoing. It shows the hybrid approach working in practice: buy the platform layer where the category is mature, build the identity and data layer where the requirement is specific to the organisation. Both are the right call, for different reasons.

In Hindsight: NSW Department of Education

The NSW Department of Education’s school infrastructure programme spans 2,200 schools statewide. One of the largest public infrastructure portfolios in Australia. Hundreds of concurrent projects running at any given time, each with its own budget, milestones, contractors, and reporting obligations.

We built a bespoke programme management system to manage delivery across that portfolio. A layer that sat above individual projects and gave the programme visibility across all of them. That was the right call. The mistake was scope. Instead of keeping the system focused on what the programme actually needed (overview, reporting, recording decisions, monitoring budget) we built down into controlling the detail of individual projects. That level of project management is a solved problem. Established platforms handle it well.

The better approach would have been to keep the programme layer focused on portfolio oversight and let individual projects run on an established project management platform underneath. Pull data up from those systems for reporting and control. Build the overview. Buy the detail.
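“Build the overview, buy the detail” can be sketched as a programme layer that only reads and aggregates what the project platform already holds. Everything here is hypothetical: the `ProjectSummary` fields, the `build_overview` function, and the sample figures. In practice the rows would come from the bought platform’s reporting API or an export, which is not shown.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class ProjectSummary:
    """Summary fields pulled up from the project management platform."""
    project_id: str
    budget: float
    spent_to_date: float
    milestones_total: int
    milestones_done: int


@dataclass(frozen=True)
class PortfolioOverview:
    project_count: int
    total_budget: float
    total_spent: float
    over_budget: tuple[str, ...]
    milestone_completion: float  # share of all milestones completed, 0.0 to 1.0


def build_overview(projects: list[ProjectSummary]) -> PortfolioOverview:
    """Aggregate project-level data into the programme view; never edits project detail."""
    total_milestones = sum(p.milestones_total for p in projects)
    done = sum(p.milestones_done for p in projects)
    return PortfolioOverview(
        project_count=len(projects),
        total_budget=sum(p.budget for p in projects),
        total_spent=sum(p.spent_to_date for p in projects),
        over_budget=tuple(sorted(p.project_id for p in projects
                                 if p.spent_to_date > p.budget)),
        milestone_completion=done / total_milestones if total_milestones else 0.0,
    )


# Hypothetical sample rows standing in for data pulled from the project platform.
projects = [
    ProjectSummary("P1", 1_000_000, 900_000, 10, 8),
    ProjectSummary("P2", 2_000_000, 2_100_000, 12, 6),
    ProjectSummary("P3", 500_000, 100_000, 8, 2),
]
overview = build_overview(projects)
print(overview.over_budget)  # ('P2',)
```

The boundary is the design choice: the programme layer owns reporting, budget monitoring, and decisions, while task-level scheduling and contractor detail stay in the platform underneath.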

This is the inverse of the Football Australia example. Where Football Australia recognised early that the core identity problem had to be built, the NSW Department of Education experience shows what happens when you build too far past the line. The programme management layer was right. Going deeper was not.

A Word of Caution on AI-Assisted Builds

The collapse in build cost comes with a new failure mode that engineering leaders need to understand.

An inexperienced team uses AI to build a bespoke integration. Every business requirement is met: the right data flows, the right screens exist, the right outputs are produced. The code looks competent. It passes UAT because testers validated business behaviour: data in, correct output out.

But the API endpoints have no authentication. Any caller can invoke them.

The gap wasn’t in the requirements. It wasn’t in the logic. No one asked for authentication, so the AI didn’t include it. The developers didn’t know to look for it. The testers weren’t testing for it.

This is the new failure mode of AI-assisted development: code that is functionally correct but structurally unsound, produced by developers who lacked the experience to know what to ask for, or to recognise what was missing. AI lowers the floor for what a small team can build. It doesn’t raise the ceiling on their judgement.

If you’re leading AI-assisted builds with teams that are earlier in their development, you need explicit review practices that cover what the AI won’t surface: security posture, authentication, authorisation, data integrity, failure modes. The business requirements won’t tell you to check these things. That’s the job of experienced engineering leadership.
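The missing piece in the scenario above is small in code terms, which is part of why it slips through. Below is a minimal, framework-agnostic sketch in Python (3.9+). The request is modelled as a plain dict, and the `require_api_key` decorator, the `X-API-Key` header name, the `get_registrations` endpoint, and the hard-coded key are all hypothetical. A real system would use its framework’s own authentication middleware, a managed secret, per-caller credentials, and authorisation checks on top. The useful part for reviewers is the pair of checks at the end: one for the business behaviour UAT already covered, and one asserting that an anonymous caller is refused.

```python
import functools
import hmac
from collections.abc import Callable

Request = dict
Response = tuple[int, str]
Handler = Callable[[Request], Response]


def require_api_key(expected_key: str) -> Callable[[Handler], Handler]:
    """Wrap a handler so any request without the expected key gets a 401."""
    def decorator(handler: Handler) -> Handler:
        @functools.wraps(handler)
        def wrapped(request: Request) -> Response:
            supplied = request.get("headers", {}).get("X-API-Key", "")
            # compare_digest is designed to resist timing analysis, unlike ==.
            if not hmac.compare_digest(supplied.encode(), expected_key.encode()):
                return 401, "unauthorised"
            return handler(request)
        return wrapped
    return decorator


@require_api_key("example-key-not-a-real-secret")
def get_registrations(request: Request) -> Response:
    return 200, "registration data"


# What UAT checked: the business behaviour works for a legitimate caller.
assert get_registrations({"headers": {"X-API-Key": "example-key-not-a-real-secret"}})[0] == 200
# What nobody asked for: an anonymous caller is refused.
assert get_registrations({"headers": {}})[0] == 401
assert get_registrations({})[0] == 401
```

The second group of assertions is the kind of check that has to be added by someone who knows to ask for it; the business requirements alone would never produce it.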

What This Means for Engineering Leaders

The first implication is operational: revisit decisions made under old assumptions. Vendor contracts signed three to five years ago, on the basis that building wasn’t viable, should be re-examined at renewal time. The economics have moved. Some of those decisions would land differently today.

The second implication is capability: even if you end up buying, you need the ability to evaluate intelligently. That means being able to prototype, to build proof-of-concepts, to assess what a vendor solution actually does and doesn’t do against your requirements. Teams that can’t build anything can’t evaluate purchases either. The capability to build, even at a modest scale, makes you a better buyer.

The third implication is risk framing: the “buy trap” is as real as the “build trap.” Vendor dependency, pricing power shifts, roadmap misalignment, exit costs: these are organisational risks that compound quietly over time. The organisations most exposed are the ones that bought deeply into vendor stacks for core capabilities, without a viable exit path. That risk was always present; it’s more visible now that alternatives are more realistic.

And the fourth is the quality bar for AI-assisted builds: AI raises output volume without automatically raising output quality. The engineering judgement required to assess security, architecture, and structural soundness doesn’t get automated. If you’re building more, and you now can, you need experienced oversight to match.

Closing

The equation has changed. Not in a way that makes building the obvious default; context still determines the right call. But in a way that makes the old “default to buy” assumption increasingly difficult to justify without running the numbers.

The leaders who make the best decisions in this environment are the ones who actively recalibrate: who can run a real TCO comparison that includes hidden costs, who understand what makes a capability differentiating versus commoditised, who have built the internal capability to evaluate and prototype, and who know the limits of AI-assisted development well enough to put appropriate quality controls around it.

There are no tidy answers here. There were never tidy answers. But the assumptions underneath the decision have shifted, and leaders who are still operating on the old assumptions are making choices on a map that no longer matches the terrain.